By empowering LLMs to decompose, navigate, and reassemble information instead of brute-forcing it into memory, Recursive Language Models allow AI to process inputs up to a hundred times beyond what a single context window can hold.

We applied that same recursive architecture to persistent memory and found that retrieval quality compounds. The result matches memory systems built on Redis and Qdrant cloud infrastructure with zero databases and zero cloud. Just local markdown files.

| Metric | Ori (RMH) | Mem0 |
|---|---|---|
| R@5 | 90.0% | 29.0% |
| F1 | 52.3% | 25.7% |
| LLM-F1 (answer quality) | 41.0% | 18.8% |
| Speed | 142s | 1347s |
| API calls for ingestion | None (local) | ~500 LLM calls |
| Cost to run | Free | API costs per query |
| Infrastructure | Zero | Redis + Qdrant |

Recursive Memory Harness brings us one step closer to active memory recall instead of sequential retrieval.

What is RLM

In December 2025, researchers at MIT CSAIL posited a simple inversion.

Instead of cramming everything into a sequential, linear context window that gets loaded fresh for every single query, you treat the data as an environment the model can tangibly navigate.

The result: an 8B parameter model using RLM outperformed its own base model by 28.3% and approached GPT-5 on long-context tasks. A smaller, cheaper model with recursion nearly matching a frontier model without it.

The Library Analogy

Think of the context window as a desk. Everything the AI knows during a query — every document, every retrieved passage, every instruction — has to fit on that desk.

RAG's answer to this problem is a librarian. You ask a question, and the librarian runs into the stacks, grabs books that seem relevant based only on their titles, without reading or understanding the contents, and drops them on your desk.

As a result, they often bring the wrong books.

And every book they bring takes up space on your desk, leaving less room for actual thinking.

With recursion, you (the model) walk into the library yourself.

- You read the catalog

- Pull a book off the shelf and skim it

- Make a note to yourself

- Go back to the catalog with a sharper question based on what you just learned

- Pull another book

- Repeat

The desk stays clean throughout the process, because you bring only your final results back to it.

Mechanically, the document is stored as a variable in a programming environment outside the context window. The model writes small programs to explore it in chunks. When a section is still too large, the model spawns a sub-call on that section alone — a junior researcher who reads one piece and reports back. The recursion nests as deep as it needs to.
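The recursion described above can be sketched in a few lines. This is a simplified illustration, not the RLM authors' implementation: in RLM the model writes its own exploration programs, whereas here the split is hard-coded to halving. The names `recursive_answer`, `llm`, `max_chars`, and `max_depth` are all hypothetical, and `llm` stands for any function that takes a prompt string and returns a string.

```python
from typing import Callable


def recursive_answer(
    text: str,
    question: str,
    llm: Callable[[str], str],
    max_chars: int = 2000,
    depth: int = 0,
    max_depth: int = 4,
) -> str:
    """Answer `question` over `text` while keeping each leaf call small.

    The full document lives in the `text` variable, outside any prompt.
    Only slices of it are ever passed to `llm`.
    """
    # Base case: the slice fits on the "desk", or we have hit the depth cap
    # (in which case the slice is truncated to max_chars).
    if len(text) <= max_chars or depth >= max_depth:
        return llm(f"Question: {question}\nText: {text[:max_chars]}")

    # Recursive case: hand each half to a sub-call (the "junior researcher")
    # and keep only the short notes that come back.
    mid = len(text) // 2
    notes = [
        recursive_answer(part, question, llm, max_chars, depth + 1, max_depth)
        for part in (text[:mid], text[mid:])
    ]
    return llm(f"Question: {question}\nNotes: " + "\n".join(notes))
```

The parent call never sees the raw halves, only the notes returned by its sub-calls, which is what keeps the desk clean.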

When It Comes to Memory, We Are Solving the Wrong Problem

Current memory and retrieval systems all iterate on the same broken process: consuming and loading as much information into the context window as possible.

Build bigger desks. Spend millions creating more specialized systems for finding a needle in the haystack (RAG).

Mechanically, the current big players and labs (Mem0, Letta, Supermemory) all continue to let the LLM operate sequentially: store, search, retrieve, inject, forget. Then they optimize around it.

But as I have argued elsewhere, we should be building harnesses that force the model to relate to pieces of information comprehensively and relatively.

We should be building solutions that model human cognition the same way a plane models a bird.

Introducing Recursive Memory Harness

RMH is a framework that posits persistent memory should be an environment, not a database.

It is a harness that forces the model to relate to pieces of information comprehensively rather than as isolated nodes.

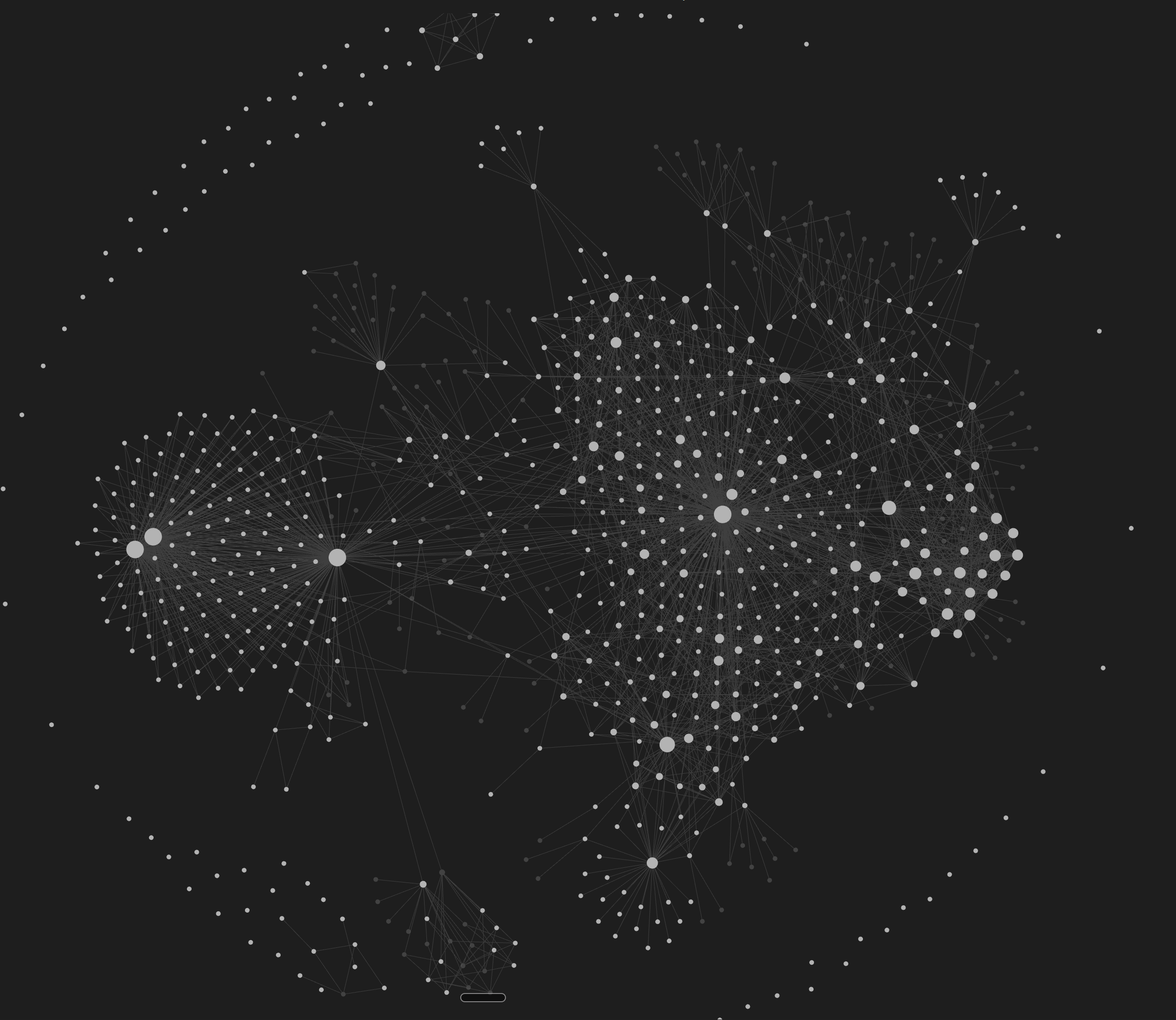

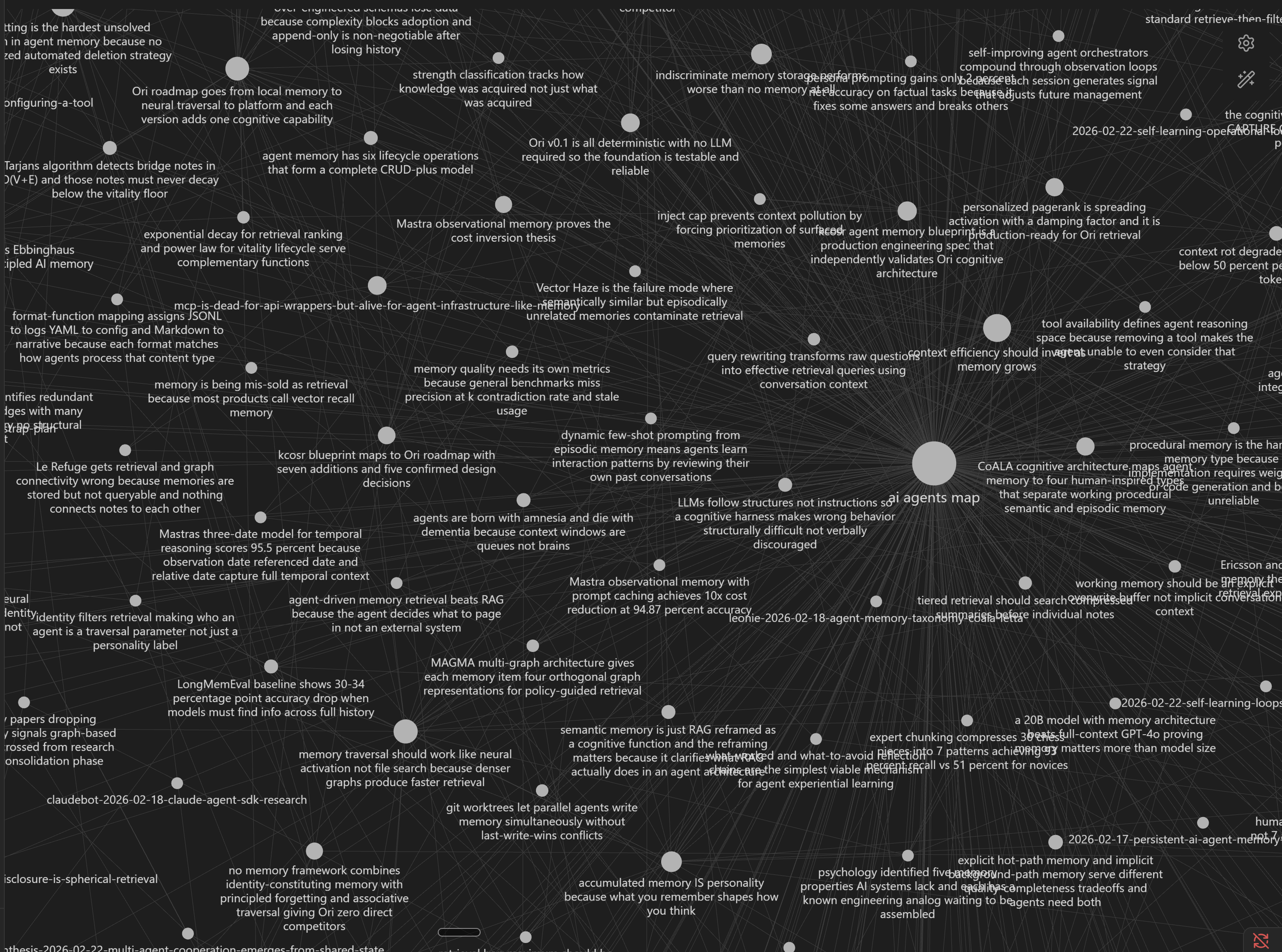

Knowledge Graph as Prerequisite

The database becomes a knowledge graph. Notes become nodes, and each piece of information is defined by its relation to other pieces, similar to neurons in the brain. Activating one activates others to varying degrees.

RMH Enforces Three Constraints

CONSTRAINT 01

Retrieval must follow the graph.

When a note is retrieved, activation propagates along its edges to connected notes. The system cannot return isolated results. It is forced to return clusters of related knowledge, as the graph encodes how each piece of information relates to every other piece it touches.
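A minimal sketch of graph-following retrieval, assuming the graph is a plain adjacency map from note name to neighbor names. The function name `retrieve_cluster` and the `max_hops` and `decay` parameters are illustrative assumptions, not Ori's actual API or tuning.

```python
from collections import deque


def retrieve_cluster(
    graph: dict[str, set[str]],
    seeds: list[str],
    max_hops: int = 2,
    decay: float = 0.5,
) -> dict[str, float]:
    """Return seed notes plus connected notes, each with an activation score.

    Activation starts at 1.0 on each seed and is multiplied by `decay`
    for every edge it crosses, up to `max_hops` edges away.
    """
    activation = {seed: 1.0 for seed in seeds}
    queue = deque((seed, 0) for seed in seeds)
    while queue:
        note, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not propagate past the hop limit
        for neighbor in graph.get(note, ()):
            score = activation[note] * decay
            # Keep the strongest activation seen for each note.
            if score > activation.get(neighbor, 0.0):
                activation[neighbor] = score
                queue.append((neighbor, hops + 1))
    return activation
```

The return value is always a cluster: a seed with any edges brings its neighborhood with it, so an isolated hit is only possible for a note that has no links at all.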

CONSTRAINT 02

Unresolved queries must recurse.

When a retrieval pass does not fully resolve the query, the system generates sub-queries targeting what is missing. Each sub-query enters the graph from new entry points and runs its own pass. Results accumulate. After each pass, the system measures whether new relevant information is still being found. When it isn't, the system stops.
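The loop below is one way to picture this constraint. It is a sketch under assumptions: `search` and `propose_subqueries` are hypothetical callables standing in for a graph retrieval pass and an LLM that names what is still missing, and the stopping rule (`min_new` new notes per pass) is a simple stand-in for whatever convergence measure a real system uses.

```python
from typing import Callable


def recursive_retrieve(
    query: str,
    search: Callable[[str], list[str]],
    propose_subqueries: Callable[[str, set[str]], list[str]],
    max_passes: int = 5,
    min_new: int = 1,
) -> set[str]:
    """Accumulate notes over several passes until nothing new turns up."""
    found: set[str] = set()
    frontier = [query]
    for _ in range(max_passes):
        # Run one pass for every open (sub-)query and collect unseen notes.
        new: set[str] = set()
        for q in frontier:
            new |= set(search(q)) - found
        if len(new) < min_new:
            break  # no new relevant information: stop recursing
        found |= new
        # Ask what is still missing, given everything found so far.
        frontier = propose_subqueries(query, found)
        if not frontier:
            break  # the query is considered resolved
    return found
```

For a two-hop question, the first pass might find the note that names a person, and the sub-query generated from that note finds the second document needed for the answer.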

CONSTRAINT 03

Every retrieval must reshape the graph.

When a note is accessed, connected notes within two hops receive a vitality boost that decays with distance (spreading activation). When new knowledge references a previously retrieved note, that note gets an additional boost. Notes never retrieved or cited decay on a power-law curve consistent with the Ebbinghaus forgetting curve. The graph is not allowed to be static. It strengthens from use, prunes from neglect, and develops expertise the way a brain does.
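To make the update rule concrete, here is a rough sketch of both halves: the two-hop boost and the power-law decay. All constants (`base_boost`, `falloff`, `exponent`) and both function names are assumptions chosen for illustration; the source does not state the values Ori uses.

```python
def boost_neighbors(
    vitality: dict[str, float],
    graph: dict[str, set[str]],
    accessed: str,
    base_boost: float = 0.2,
    falloff: float = 0.5,
) -> None:
    """Boost notes within two hops of `accessed`, less for the farther ones."""
    one_hop = set(graph.get(accessed, ())) - {accessed}
    two_hop: set[str] = set()
    for note in one_hop:
        two_hop |= set(graph.get(note, ()))
    two_hop -= one_hop | {accessed}

    for hops, notes in ((1, one_hop), (2, two_hop)):
        for note in notes:
            # The boost shrinks geometrically with distance from the access.
            vitality[note] = vitality.get(note, 0.0) + base_boost * falloff**hops


def decayed_vitality(vitality: float, days_idle: float, exponent: float = 0.5) -> float:
    """Power-law decay: vitality * (1 + t)^(-exponent) after t idle days."""
    return vitality * (1.0 + days_idle) ** -exponent
```

With these example constants, a note left untouched for three days keeps half its vitality, while a note two hops from a fresh access gains a quarter of the base boost.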

Any framework or system that retrieves isolated notes, answers in a single pass, or treats memory as static storage is not RMH.

Ori Mnemos: RMH-Powered, Open Source Memory Architecture for AI Agents

Ori Mnemos is the first implementation of Recursive Memory Harness.

The AI world is converging on memory and context efficiency as the bottleneck. Your agent's memory, your LLM's memory, is exponentially increasing in value. We are already seeing implementations where agents are sharing knowledge and learning from each other across platforms, decentralized or not.

The default architecture in AI memory is cloud-hosted and API-gated — your agent's knowledge stored on someone else's servers, billed monthly, with no performance advantage over local alternatives.

The AI landscape is the wild west. Norms are being created and systems are being entrenched. Ori started as a philosophical endeavor to nip this in the bud before it becomes the standard.

The question: how can you build a memory architecture that performs at the level of cloud-dependent systems while keeping every piece of data yours?

The answer was a folder of markdown files connected by wiki-links, versioned with git, readable with your eyes. No database. No cloud. No vendor between you and your memory. That experiment produced a system that compresses the entire memory stack — Redis, Qdrant, cloud infrastructure — into local files that perform comparably on standard benchmarks with zero dependencies.
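As an illustration of how a folder of markdown files becomes a graph, the sketch below scans a directory for `[[wiki-links]]` and builds an undirected adjacency map. It assumes the common `[[target]]`, `[[target|alias]]`, and `[[target#heading]]` link forms and a flat folder where each file name is the note name; Ori's actual parser and vault layout may differ, and `build_graph` is a hypothetical name.

```python
import re
from pathlib import Path

# Captures the link target, ignoring an optional "|alias" or "#heading" suffix.
WIKI_LINK = re.compile(r"\[\[([^\]|#]+)(?:[|#][^\]]*)?\]\]")


def build_graph(vault: Path) -> dict[str, set[str]]:
    """Map each note name to the set of notes it is linked with."""
    paths = list(vault.glob("*.md"))
    graph: dict[str, set[str]] = {path.stem: set() for path in paths}
    for path in paths:
        text = path.read_text(encoding="utf-8")
        for target in WIKI_LINK.findall(text):
            target = target.strip()
            # Skip self-links and links to notes that do not exist.
            if target in graph and target != path.stem:
                graph[path.stem].add(target)
                graph[target].add(path.stem)  # treat links as undirected
    return graph
```

Because the graph is derived from the files on every run, the markdown folder stays the single source of truth and remains readable and versionable with git.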

The system runs through MCP and a CLI, with the CLI being finalized in the coming days. Any model connects to Ori and receives recursive graph navigation without writing code or managing state. It is open source under Apache 2.0. Install it with `npm install ori-memory`.

I use it in my personal workflow. Over 500 notes spanning six project domains. Over time my AI agent has become a personalized, specialized assistant that knows my work across all of them. It finds connections between projects I didn't draw myself. It surfaces decisions I made months ago when they become relevant again. All of it saved on my computer. All of it mine.

Benchmarks

HotpotQA tests multi-hop question answering — each question requires finding and combining information from exactly two different documents to produce an answer. Head-to-head on the same 50 questions, same scoring:

| Metric | Ori (RMH) | Mem0 |
|---|---|---|
| R@5 | 90.0% | 29.0% |
| F1 | 52.3% | 25.7% |
| LLM-F1 (answer quality) | 41.0% | 18.8% |
| Speed | 142s | 1347s |
| API calls for ingestion | None (local) | ~500 LLM calls |
| Cost to run | Free | API costs per query |
| Infrastructure | Zero | Redis + Qdrant |
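For readers unfamiliar with the first two metrics, here is a sketch of how they are commonly computed. It assumes the usual definitions: R@5 as the fraction of gold supporting documents found in the top five results, and F1 as token overlap between predicted and reference answers. The benchmark's exact scoring script is not shown in this post, and it may normalize answers differently (for example by stripping punctuation and articles).

```python
from collections import Counter


def recall_at_k(retrieved: list[str], gold: list[str], k: int = 5) -> float:
    """Fraction of gold documents that appear in the top-k retrieved list."""
    if not gold:
        return 0.0
    return len(set(retrieved[:k]) & set(gold)) / len(gold)


def token_f1(prediction: str, answer: str) -> float:
    """Harmonic mean of token precision and recall (lowercased, whitespace split)."""
    pred_tokens = prediction.lower().split()
    ref_tokens = answer.lower().split()
    # Multiset intersection counts each shared token at most as often as it occurs in both.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)
```

On a two-document question, retrieving one of the two gold documents in the top five scores 0.5 for that question, which is why multi-hop benchmarks penalize single-pass retrieval so heavily.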

Recursive Language Models proved that recursion over context is worth more than a bigger context window.

Recursive Memory Harness proves that relativity in memory outperforms isolated retrieval.

Ori Mnemos is the first implementation, open source, zero infrastructure, performing at the level of cloud-dependent systems on standard benchmarks.

RMH is one step closer to active memory recall.